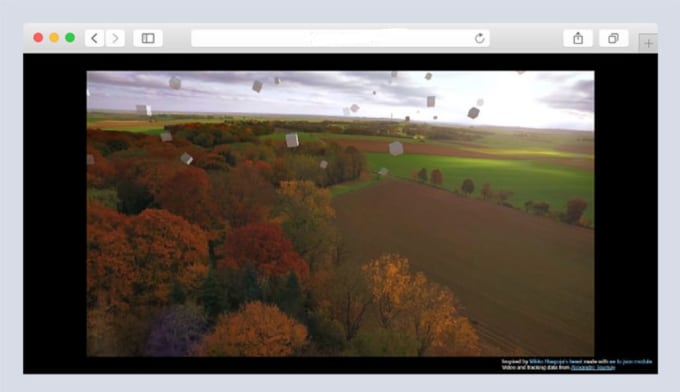

I find a lot of generative art has a flattened, graphic design-inspired style. There are cultural reasons for this (generative art using code has its roots in conceptual art and minimalism) and technical reasons (there is a significantly higher learning curve and barrier to entry when programming for photorealism). Lots of amazing work is made in this style! That said, a great way to inspire new ideas is to try to do the opposite of what you are used to doing. For that reason, I wanted to try to take a step towards something a bit more cinematic and add some depth of field blur to my 3D scenes.

If we're going to program this effect, we should start off with an understanding of what exactly it is we're making. The term "depth of field" comes from cameras, and it refers to how much wiggle room you have when positioning an object that you want to appear sharp in a photo. Position it too close or too far from the camera and it will start to blur and go out of focus.

So why does that happen?

Two sentences about the actual optics and then we'll move on I swear

The lens of a camera bends light to try to focus it onto the film in the camera. When all the light that originated from a single point on an object ends up focused to a single point on the film, then that point appears sharp in the image; otherwise, the light from that point gets spread out and the result is a blurry image.

Why does that make an image look fuzzy though?

Why does an image look fuzzy when the light gets spread out? It's one of those things that feels intuitive, but maybe that's just because we're so used to seeing it. To simulate the effect of a bunch of photons spreading out, let's see what happens when we duplicate an image a bunch of times, all slightly transparent. Drag the sliders to see how it looks with more or fewer copies of an image (the more copies, the closer we get to "real life" with billions of photons) and to see how it looks when spread out various amounts.

Basically: with enough samples, all with random offsets, we converge on a smooth blur! This will be a guiding principle for how we implement our own blur.

So, we know how to uniformly blur a whole image. A photo isn't all blurred uniformly, though: some things in the image are in focus and others aren't. Instead, we need to treat every fragment of every object in the image as a separate object, and blur them different amounts.

Every fragment that we render will be blurred, and the question is just how much. If we are rendering a fragment that sits exactly at the focal target distance, its colour will be spread out over a radius of zero and it will be perfectly in focus. As a fragment's distance from the focal target increases, its blur radius increases by the blur rate.

Imagine our image is an array of pixels, where each one has a colour, a position on the screen, and also a depth.

This would work, but blurring one pixel at a time like this is slow, and we don't automatically know the depth of each pixel.

We could render one object at a time with this method instead of one pixel at a time, and that works decently. In fact, that's what I used for this sketch, playing off of the album art for Muse's Origin of Symmetry. However, this uniformly blurs each object, so it can't handle single objects being partially in focus and partially out of focus. It would be great if we could get true per-pixel blur.

If we're rendering a 3D scene via WebGL, it actually does have the depth information stored! It isn't immediately accessible to us, but it's used internally in the rendering pipeline.

If you were drawing a scene in a 2D canvas, you would manage depth by drawing the farthest objects first. This way, closer objects have the ability to draw overtop of the previously drawn farther objects. This is called the painter's algorithm, and we just did this earlier when we sorted pixels by their depth.

WebGL works a little differently, however. You can draw things in any order you want and it will still show closer objects in front (well, unless you tell it not to). It does this by recording the depth of every pixel in a depth buffer, which is like a monochrome image the same size as the main canvas. Instead of interpreting the pixel values in the depth buffer as colours, you read them as depth. The brightness of the depth buffer corresponds to the distance from the camera, where full white and full black are determined by the far and near planes in the perspective matrix. (With a standard perspective matrix this mapping is not linear: most of the range is used up close to the near plane.)

When drawing a new object in WebGL, for each pixel on the screen that an object occupies, WebGL checks in the depth buffer to see whether the last thing drawn on that pixel is closer to the camera than the current object. If so, it will not update the colour of that pixel, since the object being drawn should be occluded by the already drawn pixel.
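The depth test described above can be modelled on the CPU in a few lines. This is only a sketch of the idea, not how WebGL is implemented internally: `createBuffers` and `drawFragment` are hypothetical names, depth is assumed to be a number between 0 (near plane) and 1 (far plane), and the comparison mirrors WebGL's default "less than" depth function.

```javascript
// Hypothetical CPU-side model of a colour buffer plus a depth buffer.
// Real WebGL performs this test on the GPU for every fragment.
function createBuffers(width, height) {
  return {
    width,
    height,
    // Placeholder colour for pixels nothing has been drawn on yet
    color: new Array(width * height).fill('background'),
    // Start every pixel at the far plane (1.0), so anything drawn is closer
    depth: new Array(width * height).fill(1.0),
  };
}

// Try to draw one fragment. Returns true if the pixel was updated.
function drawFragment(buffers, x, y, depth, color) {
  const i = y * buffers.width + x;
  // If the last thing drawn on this pixel is at least as close to the
  // camera, the new fragment is occluded and the pixel is left alone.
  if (buffers.depth[i] <= depth) return false;
  buffers.depth[i] = depth;
  buffers.color[i] = color;
  return true;
}

// Draw order does not matter: the closer fragment wins either way.
const farFirst = createBuffers(2, 1);
drawFragment(farFirst, 0, 0, 0.7, 'far');
drawFragment(farFirst, 0, 0, 0.3, 'near');

const nearFirst = createBuffers(2, 1);
drawFragment(nearFirst, 0, 0, 0.3, 'near');
drawFragment(nearFirst, 0, 0, 0.7, 'far');

console.log(farFirst.color[0], nearFirst.color[0]); // near near
```

Unlike the painter's algorithm, nothing here needs to be sorted: each pixel just remembers the closest depth it has seen so far.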
